GSA SER Verified Lists Vs Scraping

The Foundation of Successful Automation

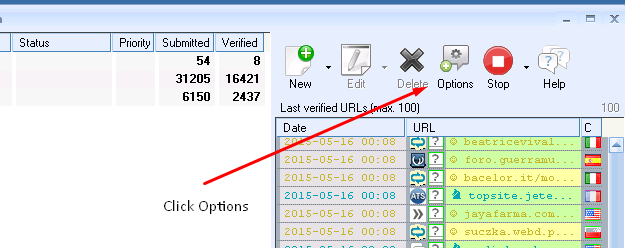

Every seasoned GSA Search Engine Ranker user eventually faces a critical decision that defines the efficiency and safety of their campaigns. The choice between importing pre-verified target lists and scraping fresh URLs on the fly is more than just a workflow preference. It directly affects the authority of the domains you link from, the spam scores attached to them, and the sheer number of live links you can build per hour. Understanding the trade-offs in the GSA SER verified lists vs scraping debate is essential if you want to move beyond random blasts and into strategic link building.

What Are Verified Lists Really Offering?

A verified list is a curated database of URLs that have already been tested. Someone—or more likely a sophisticated software pipeline—has already checked that these targets allow registration, accept content, and most importantly, that the posted links actually go live. This is not just a list of sites; it’s a collection of proven opportunities. The primary allure is the elimination of the identification phase. You skip the part where GSA SER has to hammer a domain to find the login page, detect the platform, and learn the form fields. Instead, the engine goes straight into creation mode.

High-quality verified lists are often segmented by platform type, such as WordPress comments, wiki edits, or guestbook signatures. They come with pre-filled engine configurations, meaning the success rate spikes dramatically. For newcomers, this shortcut can feel like a superpower. However, the market is flooded with outdated or overused lists. A list that worked last month might contain domains that have since cleaned up their act, deployed aggressive anti-spam, or simply gone offline. The public nature of many verified lists means your competitors might be targeting the exact same footprints, leading to rapid link devaluation.

The Mechanics of Real-Time Scraping

Scraping, on the other hand, is a live discovery process. GSA SER uses its built-in search engine parsers to query Google, Bing, and other sources for keywords combined with specific footprints. It then harvests the resulting URLs, filters them against your blacklists, and tests them for posting capability in real time. No human pre-selection occurs. The advantage here is freshness. You are constantly finding brand-new domains that have never been hit by automated tools, and you can pivot your strategy mid-campaign by simply changing your search queries.
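The query-building and blacklist-filtering steps described above can be sketched outside the tool. This is a minimal illustration in Python, not GSA SER's internal logic: the function names, the sample footprints, and the blacklist format (a set of bare domains) are all assumptions made for the example.

```python
from itertools import product
from urllib.parse import urlparse


def build_queries(footprints, keywords):
    """Pair every footprint with every keyword to form one search query each."""
    return [f'{fp} "{kw}"' for fp, kw in product(footprints, keywords)]


def filter_blacklisted(urls, blacklist):
    """Keep URLs whose host is not a blacklisted domain or a subdomain of one."""
    kept = []
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        blocked = any(host == d or host.endswith("." + d) for d in blacklist)
        if host and not blocked:
            kept.append(url)
    return kept


# Hypothetical inputs, for illustration only.
queries = build_queries(['"powered by wordpress"', "inurl:guestbook"], ["garden tools"])
harvested = ["http://example.com/post", "http://spam.bad.example/x"]
clean = filter_blacklisted(harvested, {"bad.example"})
```

Matching on the host rather than the full URL string means a blacklisted domain also blocks its subdomains, which is usually what a domain-level blacklist is meant to do.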

The downside is resource consumption. Scraping requires proxies, uses threads, and can trigger captchas and IP bans very quickly if not throttled properly. A significant portion of scraped URLs will be dead ends: sites that load endlessly, present forms that GSA SER can’t parse, or use JavaScript challenges that the engine cannot solve. This means your success rate per scraped URL is often much lower than with a verified import. Evaluating GSA SER verified lists vs scraping forces you to weigh guaranteed efficiency against the potential of discovering untouched, high-trust seed domains.

Domain Quality and Footprint Diversity

The underlying risk of leaning too heavily on verified lists is footprint uniformity. If a thousand SEOs buy the same list of 50,000 auto-approve domains, Google’s algorithms have a very clean signal to penalize. Those URLs become a cluster of obvious spam nodes. Scraping, by contrast, allows for massive diversity. You can target low-competition niches by using long-tail keywords during the scraping process, uncovering sites that are deeply buried and have no association with bulk link sellers.
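One rough way to gauge how exposed a list is to this uniformity problem is to measure how many of its hosts also appear in a widely circulated list. The sketch below is an assumption-laden illustration: the function names are hypothetical, and it compares full hostnames rather than registered domains.

```python
from urllib.parse import urlparse


def hosts(urls):
    """Return the set of lowercase hostnames found in a list of URLs."""
    found = set()
    for url in urls:
        host = urlparse(url).hostname
        if host:
            found.add(host.lower())
    return found


def overlap_ratio(my_urls, public_urls):
    """Fraction of my distinct hosts that also appear in the public list."""
    mine = hosts(my_urls)
    if not mine:
        return 0.0
    return len(mine & hosts(public_urls)) / len(mine)


# Hypothetical sample: two distinct hosts of mine, one shared with the public list.
ratio = overlap_ratio(
    ["http://a.example/1", "http://a.example/2", "http://b.example/"],
    ["https://b.example/x", "http://c.example/"],
)
```

A ratio close to 1.0 suggests the list is mostly recycled targets; what counts as acceptable is a judgment call that the source does not quantify.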

On the flip side, scraping without proper filtering can drag your project’s domain authority into the gutter. A live search might return domains registered three minutes ago, parked pages riddled with malware, or entire subnets blocked by Google. A meticulously maintained verified list is often screened on key metrics, keeping only domains above a chosen Trust Flow or Citation Flow threshold, which provides a safety net that pure scraping lacks.
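A metric screen of this kind is simple to express. The sketch below assumes you already have Trust Flow and Citation Flow values for each target from an external metrics export; the `Target` record, the function name, and the threshold values are hypothetical and should be tuned to your own risk tolerance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    url: str
    trust_flow: int
    citation_flow: int


def screen_targets(targets, min_trust_flow=15, min_citation_flow=10):
    """Keep only targets that meet both minimum metric thresholds."""
    return [
        t for t in targets
        if t.trust_flow >= min_trust_flow and t.citation_flow >= min_citation_flow
    ]


# Hypothetical metric values, for illustration only.
candidates = [
    Target("http://good.example/", trust_flow=22, citation_flow=30),
    Target("http://thin.example/", trust_flow=3, citation_flow=41),
    Target("http://new.example/", trust_flow=0, citation_flow=0),
]
screened = screen_targets(candidates)
```

Applying the same screen to scraped URLs before they reach the posting queue gives scraping part of the safety net that curated lists provide.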

When Speed and Scalability Dictate the Choice

If you are building a tier-one PBN buffer or need links with a near-zero spam score, neither raw scraping nor bulk verified lists is ideal; you would be doing careful manual curation instead. But for tier-two and tier-three campaigns, the raw numbers matter. A 1-million-target verified list can allow GSA SER to run non-stop for days without needing to wait for search engines to return results. Because no search queries are issued, the rate limits and captchas that throttle scraping never come into play. Scraping, conversely, is often bottlenecked by how many searches your proxy pool can handle per minute before getting captcha-locked. In a straight shootout of GSA SER verified lists vs scraping, verified imports almost always win in terms of raw posting velocity once the engine starts running, simply because the identification work is already done.
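The velocity argument can be made concrete with back-of-the-envelope arithmetic. Every number below is an assumed placeholder rather than a measured benchmark: the submission rate, proxy count, searches per proxy, results per search, and usable fraction are all hypothetical inputs you would replace with your own observations.

```python
def hours_of_work(list_size, submissions_per_minute):
    """How long a verified list keeps the engine busy at a steady submission rate."""
    return list_size / (submissions_per_minute * 60)


def scraped_targets_per_hour(proxies, searches_per_proxy_per_minute,
                             results_per_search, usable_fraction):
    """Usable targets per hour when search volume is the bottleneck."""
    searches_per_hour = proxies * searches_per_proxy_per_minute * 60
    return searches_per_hour * results_per_search * usable_fraction


# Assumed figures: a 1,000,000-target list at 200 submissions per minute,
# versus 50 proxies each running one search every two minutes, 10 results
# per search, and 5% of results turning out to be postable.
verified_hours = hours_of_work(1_000_000, 200)
scraped_per_hour = scraped_targets_per_hour(50, 0.5, 10, 0.05)
```

Under these assumptions the verified list lasts roughly 83 hours, or about three and a half days, while scraping yields about 750 usable targets per hour. The second figure scales directly with proxy capacity, which is why the proxy pool is the limiting factor.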

Cost Analysis and Long-Term Viability

Fresh, private verified lists maintained by reputable sources are a recurring expense. You pay monthly because a static list decays rapidly. Scraping, while seemingly free, consumes credits or proxy bandwidth that also costs money. The hidden cost of scraping is the wear on your tools and the time wasted on non-posting URLs. For many advanced users, the ultimate workflow is a hybrid model: buying a small, extremely high-quality verified list to seed the engine and keep it busy, while simultaneously running scraping tasks on low-priority threads to constantly inject new blood into the pipeline.
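A fair comparison of the two expenses is cost per live link rather than cost per month. The sketch below shows the arithmetic only; the prices, attempt counts, and live rates are invented for illustration and do not come from any vendor or benchmark.

```python
def cost_per_live_link(monthly_cost, urls_attempted, live_rate):
    """Monthly spend divided by the number of links that actually go live."""
    live_links = urls_attempted * live_rate
    if live_links <= 0:
        raise ValueError("no live links: cost per link is undefined")
    return monthly_cost / live_links


# Assumed figures: a list subscription versus a proxy plan used for scraping.
list_cost = cost_per_live_link(monthly_cost=40.0, urls_attempted=100_000, live_rate=0.20)
scrape_cost = cost_per_live_link(monthly_cost=60.0, urls_attempted=400_000, live_rate=0.02)
```

With these made-up inputs the list works out to $0.002 per live link and scraping to $0.0075. The point is the method, not the result: plug in your own spend and observed live rates before deciding how to split the budget.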

This approach ensures your project does not become fossilized. The verified lists provide a stable baseline of links per minute, while the scraping module slowly uncovers out-of-the-way forums, new blogs, and obscure CMS targets that your competitors haven’t poisoned yet. The conclusion of any deep dive into GSA SER verified lists vs scraping is not about declaring one method obsolete; it is about recognizing that they solve different stages of the automation funnel. The engine performs best when the brute force of pre-verified targets meets the adaptive discovery of intelligent scraping, all governed by a strict set of filtering rules that prioritize long-term SERP survival over a temporary spike in comments.